A Facebook post by online news site Bilyonaryo discussing a possible leadership change at the Department of Information and Communications Technology (DICT) was quickly swarmed by suspected troll accounts posting coordinated comments defending DICT Secretary Henry Aguda.

On Friday, March 13, Bilyonaryo posted an article on Facebook about former presidential spokesperson Edwin Lacierda being floated as a possible replacement for Aguda.

Less than an hour later, suspicious accounts began appearing in the comments section. From 4:22 p.m. to 4:36 p.m., 24 accounts posted comments within seconds of each other, dismissing the article with variations of "fake news."

An examination of the accounts revealed several red flags commonly associated with coordinated inauthentic behavior. Many shared identical account creation dates: clusters of accounts were created on March 30, July 5, July 15, August 25, September 22, and December 14, 2023, while two others were both created on August 24, 2024.

Several of the accounts also shared identical content, including a December 9, 2024, post by an account named นี่คือ ภาษาไทย ครับ (Jassy De Guzman in Thai) promoting a gambling app in exchange for money. Another frequently shared post, dated February 1, 2024, advertised a teeth-whitening product.

Accounts with visible friends lists were also connected to one another and had overlapping social networks, another hallmark of coordinated activity.

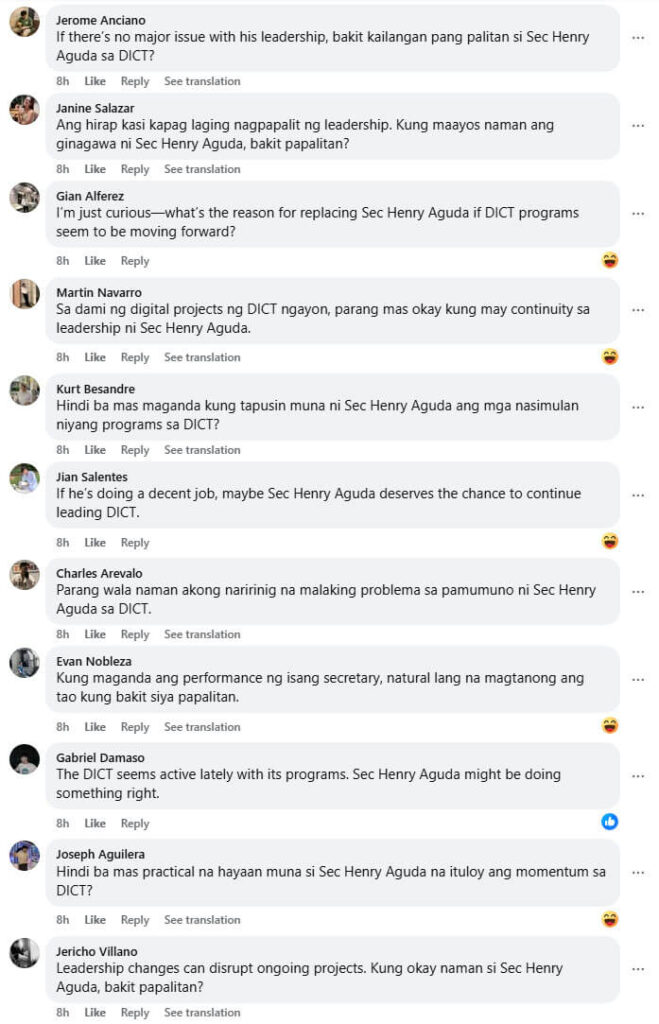

A second wave of suspicious comments appeared the following day, Saturday, March 14. Between 9:17 a.m. and 9:25 a.m., another 23 accounts posted comments in rapid succession.

Unlike the first wave, which labeled the article "fake news," the second wave shifted to pro-Aguda messaging: variations of arguments stressing the need for "continuity" at the DICT, questioning why Aguda should be replaced, and asserting that he is performing well as secretary.

The accounts in this second wave exhibited additional signs of inauthenticity. Many had missing cover photos, extremely low friend counts, minimal posting histories consisting largely of reshared inspirational quotes or generic content, little to no original media, and long periods of inactivity.

The differences between the two sets of accounts suggest that more than one troll network may have been involved in the comment surge.

Out of 62 commenter accounts examined, 47 displayed strong indicators of inauthenticity. The near-simultaneous posting patterns point to coordinated activity and possible automation.

Another indicator of coordination is that many of the accounts with visible friends lists were directly connected to one another or shared the same network of friends.

Taken together, these patterns strongly indicate coordinated inauthentic behavior (CIB), a tactic often used to manipulate online discourse and manufacture the illusion of public support.

The speed and variation of the messaging also raise the possibility that artificial intelligence tools were used to generate the comments.

Similar tactics were documented in an operation dubbed "High Five," identified by OpenAI, in which accounts linked to the Makati-based PR firm Comm&Sense used ChatGPT to generate large volumes of inauthentic comments in English and Taglish.

Experts warn that the shift from identical copy-paste scripts to AI-generated variations marks a new phase in digital influence operations. By mimicking the natural diversity of human language, these campaigns are becoming harder for automated moderation systems to detect.

As coordinated online influence operations grow more sophisticated, digital media and information literacy (MIL) is becoming an increasingly critical defense against the manipulation of public discourse.